Most marketing insights are not reproducible. They depend on who is analyzing the data.

Analysts often interpret the same campaign performance differently, producing inconsistent conclusions from identical inputs.

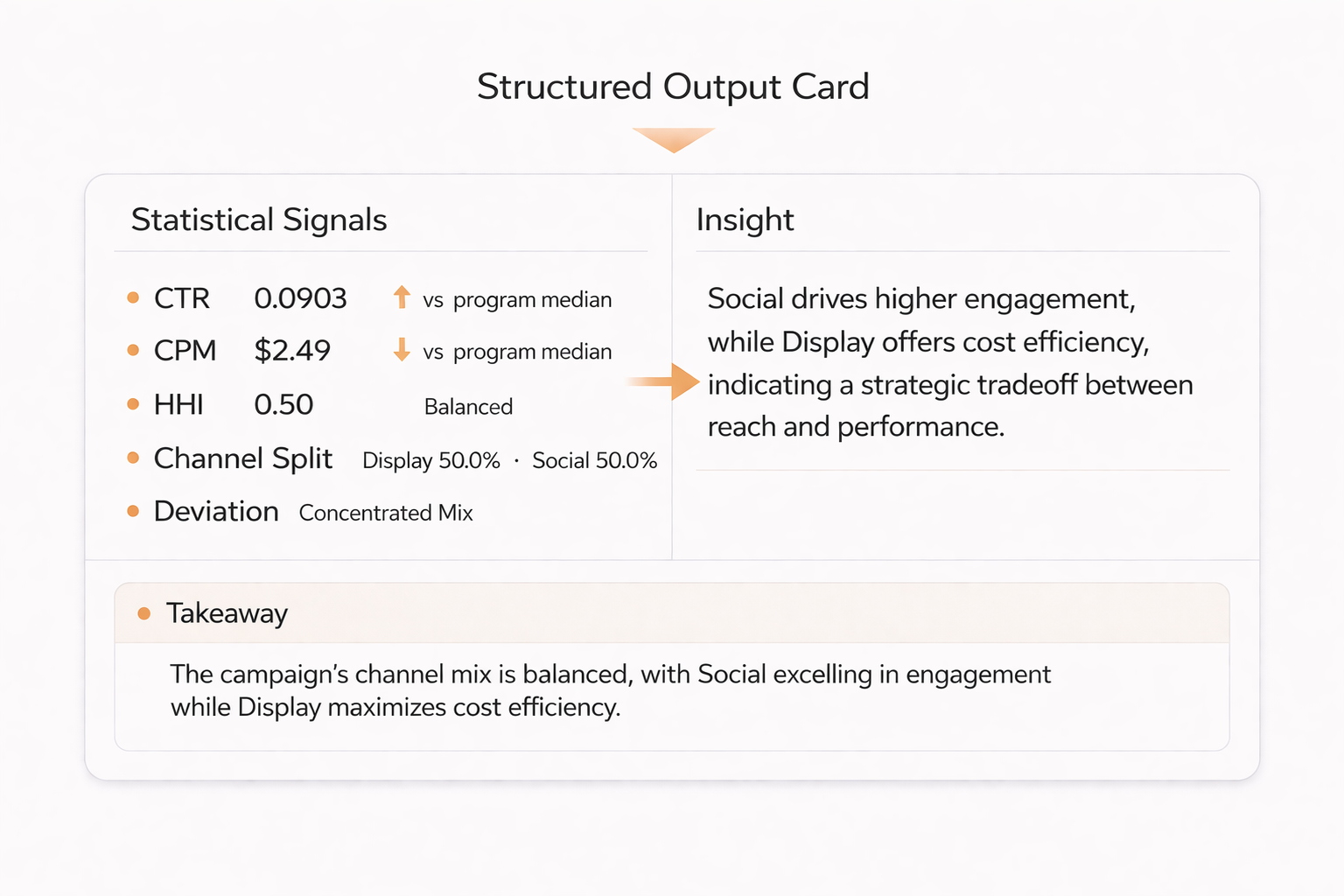

This system formalizes what counts as an insight by separating deterministic signal computation from constrained LLM interpretation.

Overview

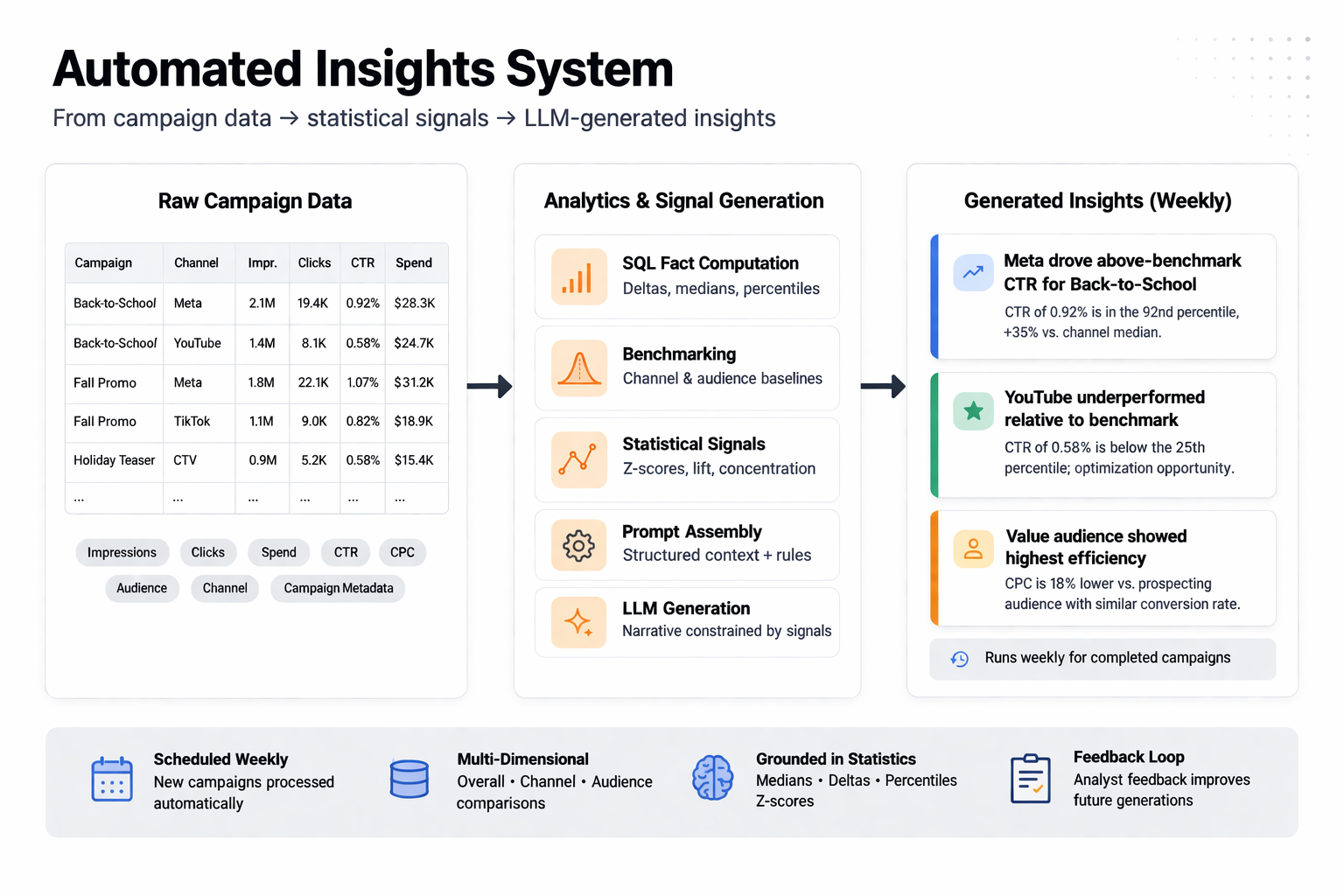

This system generates actionable insights from historical marketing campaign data by using a client’s own performance as the benchmark.

Computation owns the signals and the model only interprets them, which keeps every insight traceable end to end.

Instead of relying on external comparisons, the system evaluates campaigns relative to historical baselines, surfacing meaningful deviations, trends, and opportunities.
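
This is a minimal illustration of benchmarking against a client's own history. The sketch scores one metric as a z-score relative to that client's prior campaigns. Several pieces are assumptions for illustration:

- the z-score test
- the `baseline_deviation` helper
- the `min_history` and `z_threshold` defaults
- the sample values

The actual system computes its signals in SQL, and its exact statistical tests are not described here.

```python
from statistics import mean, stdev


def baseline_deviation(history, current, min_history=5, z_threshold=2.0):
    """Score `current` against the client's own prior values for one metric.

    Hypothetical helper: returns None when there is too little history,
    or no variance, to benchmark against.
    """
    if len(history) < min_history:
        return None
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation across past campaigns
    if sigma == 0:
        return None
    z = (current - mu) / sigma
    return {
        "baseline_mean": mu,
        "z_score": z,
        "pct_change": (current - mu) / mu if mu else None,
        "is_deviation": abs(z) >= z_threshold,
    }


# Illustrative click-through rates from one client's past campaigns.
past_ctr = [0.012, 0.011, 0.013, 0.012, 0.010, 0.012]
print(baseline_deviation(past_ctr, current=0.018))
```

A z-score assumes a roughly symmetric, stable history. For short or skewed histories, a median-based baseline may be a safer choice.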

I designed and implemented an end-to-end analytical pipeline that defines, detects, and interprets insights through deterministic statistical signals paired with structured LLM reasoning.

The result is a repeatable system that replaces subjective analysis with consistent, data-grounded outputs.

Role

Sole Engineer (Analytics Framework + LLM System Design)

- Defined the analytical framework for identifying meaningful insights from campaign data

- Designed and implemented SQL-based benchmarking and anomaly detection logic

- Developed structured prompt systems to interpret signals within campaign context

- Built automated pipelines for recurring insight generation and delivery
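
For the SQL-based benchmarking mentioned above, one possible shape is a prior-campaign baseline. Each campaign is compared with the same client's earlier campaigns on the same metric. The sketch runs against an in-memory database through Python's standard `sqlite3` module. The `campaigns` table, its columns, and the `benchmark` helper are hypothetical, and a production warehouse would use its own SQL dialect.

```python
import sqlite3

# Hypothetical schema: one row per campaign and metric.
SCHEMA = """
CREATE TABLE campaigns (
    client_id   TEXT,
    campaign_id TEXT,
    end_date    TEXT,  -- ISO dates compare correctly as strings
    metric      TEXT,
    value       REAL
);
"""

# Each campaign is benchmarked only against the same client's earlier
# campaigns on the same metric; campaigns with no history drop out of the join.
BENCHMARK_SQL = """
SELECT c.client_id,
       c.campaign_id,
       c.metric,
       c.value,
       AVG(h.value)   AS baseline_avg,
       COUNT(h.value) AS n_history,
       (c.value - AVG(h.value)) / AVG(h.value) AS pct_vs_baseline
FROM campaigns AS c
JOIN campaigns AS h
  ON h.client_id = c.client_id
 AND h.metric = c.metric
 AND h.end_date < c.end_date
GROUP BY c.client_id, c.campaign_id, c.metric, c.value
ORDER BY c.client_id, c.metric, c.campaign_id;
"""


def benchmark(rows):
    """Load (client_id, campaign_id, end_date, metric, value) rows and benchmark them."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(SCHEMA)
        conn.executemany("INSERT INTO campaigns VALUES (?, ?, ?, ?, ?)", rows)
        return conn.execute(BENCHMARK_SQL).fetchall()
    finally:
        conn.close()


rows = [
    ("acme", "c1", "2024-01-31", "cpa", 20.0),
    ("acme", "c2", "2024-03-31", "cpa", 22.0),
    ("acme", "c3", "2024-05-31", "cpa", 30.0),
]
for row in benchmark(rows):
    print(row)
```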

The system operates as a layered analytical pipeline where structured statistical computation precedes and constrains all language generation.

What I Built

I designed a system that formalizes insight generation by combining statistical benchmarking with structured LLM interpretation.

Deterministic signals are computed first; the LLM interprets structured inputs without performing the analysis itself.

This included:

- Defining what constitutes an “insight” as a measurable deviation from a client’s historical campaign performance

- Designing a measurement and benchmarking framework that maps metrics to campaign objectives and evaluates performance against historical baselines

- Detecting anomalies and statistically significant variations in campaign performance

- Designing prompt architectures that enforce context-aware interpretation aligned with campaign objectives

- Structuring a stats JSON schema as the sole contract between computation and interpretation layers

- Enforcing structured outputs to ensure consistency, traceability, and downstream usability

- Automating recurring pipelines to generate insights for newly completed campaigns
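
The list above pairs objective-aware metric selection with a stats JSON contract. This minimal sketch shows how the two might combine. It assumes a hypothetical objective-to-metric map and hypothetical field names (`signal_id`, `direction`, and so on) rather than the system's real schema.

```python
import json

# Hypothetical objective-to-metric map: which metrics matter for each
# objective, and whether higher values are better.
OBJECTIVE_METRICS = {
    "awareness": {"reach": "higher", "cpm": "lower"},
    "conversion": {"conversion_rate": "higher", "cpa": "lower"},
}


def build_stats_payload(campaign_id, objective, signals):
    """Keep only objective-relevant signals and serialize them as JSON.

    `signals` maps metric name -> {"value", "baseline", "z_score"}, as
    produced by the computation layer. This string is all the LLM receives.
    """
    entries = []
    for metric, better in OBJECTIVE_METRICS[objective].items():
        if metric not in signals:
            continue
        s = signals[metric]
        delta = s["value"] - s["baseline"]
        # Favorability is decided here, deterministically, not by the model.
        if delta == 0:
            direction = "flat"
        elif (delta > 0) == (better == "higher"):
            direction = "favorable"
        else:
            direction = "unfavorable"
        entries.append({
            "signal_id": f"{campaign_id}:{metric}",
            "metric": metric,
            "value": s["value"],
            "baseline": s["baseline"],
            "z_score": round(s["z_score"], 2),
            "direction": direction,
        })
    payload = {"campaign_id": campaign_id, "objective": objective, "signals": entries}
    return json.dumps(payload, indent=2, sort_keys=True)


payload = build_stats_payload(
    "c3",
    "conversion",
    {
        "cpa": {"value": 30.0, "baseline": 21.0, "z_score": 2.7},
        "reach": {"value": 5000, "baseline": 4000, "z_score": 1.1},  # not a conversion metric
    },
)
print(payload)
```

Because favorability is computed upstream, the model never has to infer whether a higher CPA is good or bad for a given objective.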

The system ensures all reasoning is grounded in precomputed signals, with the LLM restricted to interpretation rather than computation.

System Design

The architecture is built around a strict separation of concerns:

- Computation Layer (SQL): computes all metrics, benchmarks, and comparative signals deterministically

- Interpretation Layer (LLM): consumes structured inputs and generates narrative insights without performing calculations

Reliability comes from structure, not from the model.

This separation enforces:

- Elimination of LLM-driven numerical reasoning

- Consistent and reproducible outputs

- Controlled variability through prompt constraints
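
This sketch makes controlled variability through prompt constraints concrete. It builds a prompt from the stats payload alone and spells out the rules the model must follow. The template wording, the `build_prompt` helper, and the reply format are hypothetical. The model call itself is omitted because the source does not name a provider or API.

```python
import json

# Illustrative rule text; the system's actual prompts are not reproduced here.
PROMPT_TEMPLATE = """You are interpreting precomputed campaign statistics.
Rules:
1. Use only the numbers that appear in STATS. Do not calculate new figures.
2. Every insight must cite one or more signal_id values from STATS.
3. Judge each signal against the campaign objective: {objective}.
4. Reply with JSON only: {{"insights": [{{"signal_ids": [...], "text": "..."}}]}}

STATS:
{stats}"""


def build_prompt(stats: dict) -> str:
    """Embed the stats payload verbatim; the model receives nothing else."""
    # str.format does not re-parse substituted values, so braces in the JSON are safe.
    return PROMPT_TEMPLATE.format(
        objective=stats["objective"],
        stats=json.dumps(stats, indent=2, sort_keys=True),
    )


stats = {
    "campaign_id": "c3",
    "objective": "conversion",
    "signals": [{"signal_id": "c3:cpa", "metric": "cpa", "value": 30.0,
                 "baseline": 21.0, "z_score": 2.7, "direction": "unfavorable"}],
}
print(build_prompt(stats))
```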

The structured JSON layer provides observability, validation, and debuggability, making insight generation transparent and auditable.
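
One way to get that validation and debuggability is to check every stats payload against its contract before it reaches the model. Payloads that fail are rejected rather than sent. This is a minimal sketch using only the standard library. The field names and allowed `direction` values are hypothetical, and a JSON Schema validator could do the same job declaratively.

```python
import json
import math

# Hypothetical contract for each signal in the stats payload.
REQUIRED_FIELDS = {"signal_id", "metric", "value", "baseline", "z_score", "direction"}
NUMERIC_FIELDS = ("value", "baseline", "z_score")
DIRECTIONS = {"favorable", "unfavorable", "flat"}


def validate_stats_payload(raw: str) -> list:
    """Return a list of problems; an empty list means the payload can be sent."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc}"]
    if not isinstance(payload, dict):
        return ["payload must be a JSON object"]

    errors = [f"missing top-level field: {k}"
              for k in ("campaign_id", "objective", "signals") if k not in payload]
    signals = payload.get("signals", [])
    if not isinstance(signals, list):
        return errors + ["signals must be a list"]

    seen = set()
    for i, sig in enumerate(signals):
        if not isinstance(sig, dict):
            errors.append(f"signals[{i}] is not an object")
            continue
        missing = REQUIRED_FIELDS - sig.keys()
        if missing:
            errors.append(f"signals[{i}] missing {sorted(missing)}")
            continue
        if not isinstance(sig["signal_id"], str):
            errors.append(f"signals[{i}].signal_id must be a string")
        elif sig["signal_id"] in seen:
            errors.append(f"signals[{i}] duplicates signal_id {sig['signal_id']}")
        else:
            seen.add(sig["signal_id"])
        for field in NUMERIC_FIELDS:
            v = sig[field]
            # bool is a subclass of int in Python, so reject it explicitly.
            if isinstance(v, bool) or not isinstance(v, (int, float)) or not math.isfinite(v):
                errors.append(f"signals[{i}].{field} is not a finite number")
        if sig["direction"] not in DIRECTIONS:
            errors.append(f"signals[{i}].direction is invalid: {sig['direction']!r}")
    return errors


good = json.dumps({
    "campaign_id": "c3",
    "objective": "conversion",
    "signals": [{"signal_id": "c3:cpa", "metric": "cpa", "value": 30.0,
                 "baseline": 21.0, "z_score": 2.7, "direction": "unfavorable"}],
})
print(validate_stats_payload(good))  # → []
print(validate_stats_payload('{"signals": [{"signal_id": "x"}]}'))
```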

Technical Constraints

A key challenge was replicating human judgment while maintaining consistency and explainability.

Insight generation is inherently subjective: it depends on judging which changes are meaningful signals and which are noise.

Early iterations revealed risks of over-interpretation and inconsistent reasoning when model outputs were insufficiently constrained.

To address this:

- Statistical signals were filtered and validated before reaching the model

- Prompts were structured to enforce context-aware interpretation tied to campaign objectives

- Outputs were constrained to reflect explicit relationships between metrics
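
The first and last safeguards above can be sketched as two small checks. A pre-filter drops weak signals. A post-check rejects any insight that cites a signal missing from the payload or quotes a number that does not appear on the signals it cites. The thresholds, field names, and helper functions are hypothetical, not the system's actual rules.

```python
import json
import re

# Matches integers and decimals such as 30, 21.0 or -0.5.
NUMBER_RE = re.compile(r"-?\d+(?:\.\d+)?")


def filter_signals(signals, z_threshold=2.0, min_history=5):
    """Keep only strong, well-supported signals before the model sees them."""
    return [
        s for s in signals
        if abs(s["z_score"]) >= z_threshold and s["n_history"] >= min_history
    ]


def check_grounding(llm_output: str, signals) -> list:
    """Flag insights that cite unknown signals or quote numbers absent from them."""
    known = {s["signal_id"]: s for s in signals}
    problems = []
    for i, insight in enumerate(json.loads(llm_output)["insights"]):
        cited = insight.get("signal_ids", [])
        if not cited:
            problems.append(f"insight {i} cites no signal")
        unknown = [sid for sid in cited if sid not in known]
        if unknown:
            problems.append(f"insight {i} cites unknown signals {unknown}")
        # Numbers the insight may quote: only those on the signals it cites.
        allowed = {
            float(known[sid][field])
            for sid in cited if sid in known
            for field in ("value", "baseline", "z_score")
        }
        for token in NUMBER_RE.findall(insight.get("text", "")):
            if float(token) not in allowed:
                problems.append(f"insight {i} quotes ungrounded number {token}")
    return problems


signals = [
    {"signal_id": "c3:cpa", "value": 30.0, "baseline": 21.0, "z_score": 2.7, "n_history": 8},
    {"signal_id": "c3:cpm", "value": 9.5, "baseline": 9.4, "z_score": 0.3, "n_history": 8},
]
kept = filter_signals(signals)  # drops c3:cpm because |z| < 2
reply = json.dumps({"insights": [
    {"signal_ids": ["c3:cpa"], "text": "CPA was 30.0 against a baseline of 21.0."}
]})
print(check_grounding(reply, kept))  # → []
```

The number check is deliberately strict. Any figure the model derives on its own, such as a percentage change, is flagged, which enforces the rule that all arithmetic happens upstream. It can also flag digits in labels such as "Q3", so a real deployment would likely need a looser tokenizer.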

This ensured insights remained both actionable and defensible in production use.

Technical Focus

The system is built around:

- Formalizing subjective analytical workflows into deterministic frameworks

- Statistical benchmarking and anomaly detection across campaign histories

- Structured LLM reasoning constrained by analytical and business context

- Automated pipelines for recurring insight generation
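
The recurring pipeline reduces to deciding which campaigns are due for analysis on each run. This minimal sketch assumes:

- a hypothetical `Campaign` record
- a set of campaign IDs that already have insights
- a short settling period after a campaign ends

How the system actually schedules runs and stores results is not described here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Campaign:
    campaign_id: str
    end_date: date


def campaigns_to_process(campaigns, processed_ids, today, settle_days=3):
    """Return campaigns that ended at least `settle_days` ago and lack insights.

    Skipping already-processed IDs makes each scheduled run idempotent;
    the (hypothetical) settling window gives late-reported results time to land.
    """
    due = [
        c for c in campaigns
        if (today - c.end_date).days >= settle_days
        and c.campaign_id not in processed_ids
    ]
    return sorted(due, key=lambda c: c.end_date)


campaigns = [
    Campaign("c1", date(2024, 5, 1)),
    Campaign("c2", date(2024, 5, 20)),
    Campaign("c3", date(2024, 6, 2)),
]
due = campaigns_to_process(campaigns, processed_ids={"c1"}, today=date(2024, 6, 3))
print([c.campaign_id for c in due])  # → ['c2']
```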

At its core, the system demonstrates how controlled reasoning over structured data enables scalable, consistent analytics.

Outcome

The system replaces manual post-campaign analysis with automated, structured insight generation.

Instead of relying on individual interpretation, teams receive consistent outputs grounded in statistically derived benchmarks.

- Reduced analyst effort required to identify campaign insights

- Improved consistency in how insights are defined and communicated

- Enabled scalable insight generation across recurring campaign cycles

- Validated internally, with analytics leadership endorsing output quality

The result is a foundation for data-grounded, scalable insight generation across clients and marketing workflows.